Celebrating its two-year anniversary, the Center announces innovative AI acceleration technologies and has nearly tripled its membership.

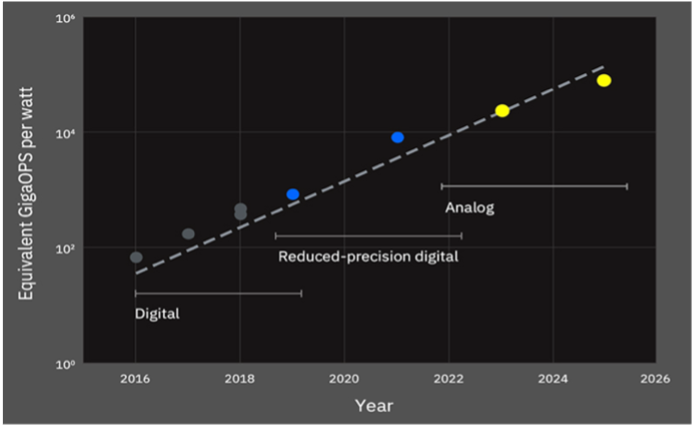

The IBM Research AI Hardware Center is the nexus of a group of academic and industry leaders contributing to the next wave of AI technologies. The Center’s mission is to develop technologies that deliver a 2.5-fold annual improvement in AI hardware compute efficiency, compounding toward a 1000-fold improvement, one of the key components for enabling what IBM terms “Fluid Intelligence”. Having recently celebrated the Center’s second anniversary, IBM is on or ahead of that pace, and has nearly tripled the Center’s membership roster of companies and institutions from six to sixteen. See a more detailed analysis here.
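To put the 2.5X annual goal in perspective, the compounding can be worked out directly. The following is a minimal sketch of that arithmetic only; the function names are illustrative, and the result is what pure compounding implies, not a timeline stated by IBM.

```python
import math

ANNUAL_GAIN = 2.5     # yearly efficiency improvement cited in the article
TARGET_GAIN = 1000.0  # overall improvement cited in the article


def cumulative_gain(years, annual_gain=ANNUAL_GAIN):
    """Total improvement after compounding annual_gain for the given years."""
    return annual_gain ** years


def years_to_reach(target, annual_gain=ANNUAL_GAIN):
    """Years of compounding needed to reach the target improvement."""
    return math.log(target) / math.log(annual_gain)


if __name__ == "__main__":
    for year in range(1, 9):
        print(f"year {year}: {cumulative_gain(year):,.1f}x")
    print(f"years to reach {TARGET_GAIN:,.0f}x: {years_to_reach(TARGET_GAIN):.2f}")
```

At a steady 2.5X per year, two years of progress compounds to 6.25X, and the 1000-fold mark falls between the seventh and eighth year.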

In the Center’s first year, IBM announced reduced and variable precision for training and inference processing of deep neural networks. Using lower precision significantly reduces cost and increases performance, both improving as the square of the reduction. For inference processing, IBM demonstrated 2-bit inference with comparable accuracy, realizing a sixteen-fold improvement in processing efficiency. Next, IBM will delve into the world of analog computation, which could yield high performance at low power.
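The "square of the reduction" rule can be checked with a few lines of arithmetic. This is a sketch under one stated assumption: the article does not name the baseline precision for the sixteen-fold figure, so the 8-bit baseline below is our assumption, chosen because it reproduces that number. The function name is illustrative.

```python
def efficiency_gain(baseline_bits, reduced_bits):
    """Estimated gain if efficiency scales with the square of the precision reduction."""
    if baseline_bits <= 0 or reduced_bits <= 0:
        raise ValueError("bit widths must be positive")
    reduction = baseline_bits / reduced_bits
    return reduction ** 2


if __name__ == "__main__":
    # ASSUMPTION: an 8-bit baseline; the article does not state one.
    print(efficiency_gain(8, 2))   # 4x fewer bits, squared
    print(efficiency_gain(16, 8))  # 2x fewer bits, squared
```

Under that assumption, moving from 8-bit to 2-bit is a fourfold reduction in precision, and squaring it gives the sixteen-fold improvement cited above.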

IBM Research also announced its third-generation digital AI core, unveiled at the ISSCC 2021 conference. The new 4-core design increases performance efficiency for training and inference sixfold, once again significantly outpacing the goal of 2.5X annual improvement.

Could Analog Computing be the next frontier in AI?

IBM Research has been experimenting with analog computation for decades, and has recently developed a chip that uses phase-change memory (PCM) to encode the weights of a neural network on a memory device. IBM Research’s continued focus on that technology is evidenced by upcoming highlight papers at this month’s VLSI conference. In another indicator that the analog space is heating up, Austin-based startup Mythic has raised an additional $70M in venture capital, bringing its total to $165M to date, for its IPU accelerators built on flash memory devices.

IBM Research has begun laying the foundation for a developer ecosystem around analog neural network computing with the newly announced AI Hardware Composer tool. The Composer provides access to IBM’s open-source analog libraries through an easy-to-use interface, allowing both novices and experienced developers to tune analog devices to create accurate AI models. AI researchers can also test neural network optimization tools to design analog hardware-aware models.
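To give a rough sense of why analog models need to be hardware-aware, the toy sketch below compares an exact matrix-vector product with one in which every stored weight is perturbed by random noise, as an imperfect memory device might do. It uses only the Python standard library and does not use the Composer or IBM's analog libraries; the function names, the Gaussian noise model, and the noise levels are all our assumptions for illustration.

```python
import random


def ideal_matvec(weights, inputs):
    """Exact matrix-vector product, as a digital core would compute it."""
    return [sum(w * x for w, x in zip(row, inputs)) for row in weights]


def analog_matvec(weights, inputs, noise_std=0.05, rng=None):
    """Matrix-vector product with each stored weight perturbed by Gaussian noise.

    ASSUMPTION: additive Gaussian noise is a stand-in for device imperfections;
    real devices have more complex behavior.
    """
    rng = rng or random.Random(0)  # fixed seed keeps the sketch repeatable
    noisy = [[w + rng.gauss(0.0, noise_std) for w in row] for row in weights]
    return ideal_matvec(noisy, inputs)


def max_abs_error(a, b):
    """Largest element-wise difference between two result vectors."""
    return max(abs(x - y) for x, y in zip(a, b))


if __name__ == "__main__":
    weights = [[0.2, -0.5, 0.1], [0.7, 0.3, -0.4]]
    inputs = [1.0, 0.5, -1.0]
    exact = ideal_matvec(weights, inputs)
    for std in (0.0, 0.01, 0.05, 0.1):
        approx = analog_matvec(weights, inputs, noise_std=std)
        print(f"noise_std={std}: max error {max_abs_error(exact, approx):.4f}")
```

A hardware-aware workflow would account for this kind of perturbation when building the model, so that accuracy holds up once the weights live on a physical device.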

CONCLUSIONS

IBM has attracted a high-caliber roster of research participants to help develop and eventually productize AI technology developed at IBM Research. We believe that IBM is creating an IP portfolio that could impact the industry for years to come while providing the consortium’s membership with advanced and highly differentiated technologies to accelerate AI at the edge and in the data center.