Nvidia focuses on maximum performance and efficiency.

We’ve all heard the predictions: the Next Big Thing will be “Physical AI” (Nvidia) or “Embodied AI” (Qualcomm). Jensen Huang has predicted that in a decade, there will be more robots than humans on Earth. These devices require small, fast, power-efficient processors and sensors on board: the brains and nervous system of the robot. These brains must also be trained and simulated, which fuels data center AI demand. (Like many semiconductor companies, Nvidia and Qualcomm are both clients of Cambrian-AI Research.)

Markets and Markets projects that the global intelligent robotics market will reach about $14 billion in 2025 and grow to about $50 billion by 2030, a compound annual growth rate (CAGR) of 29.2%. That does not include the data center resources. But if Jensen’s prediction is right, these numbers are way too low.
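As a quick sanity check on that growth rate, here is a minimal sketch of the CAGR arithmetic. The `cagr` helper is my own illustration, and the inputs are the rounded $14 billion and $50 billion figures quoted above, not the forecaster's exact numbers, so the result only approximates the published 29.2%.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate, returned as a fraction (0.29 = 29%)."""
    return (end_value / start_value) ** (1 / years) - 1


# Rounded endpoints from the text (assumption: in billions of dollars,
# 2025 to 2030 is five years of compounding).
rate = cagr(14.0, 50.0, 5)
print(f"Implied CAGR: {rate:.1%}")
```

With the rounded endpoints this works out to roughly 29% a year, which is in line with the quoted figure.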

While Intel and AMD are also in this market, Nvidia and Qualcomm currently lead in semiconductor components for robotics, with the Jetson and Snapdragon series. I will discuss Nvidia’s new Jetson Thor, based on the Blackwell GPU architecture, in this issue, and will dive into the Qualcomm innovations in an upcoming post.

Nvidia Jetson Thor, Now Shipping

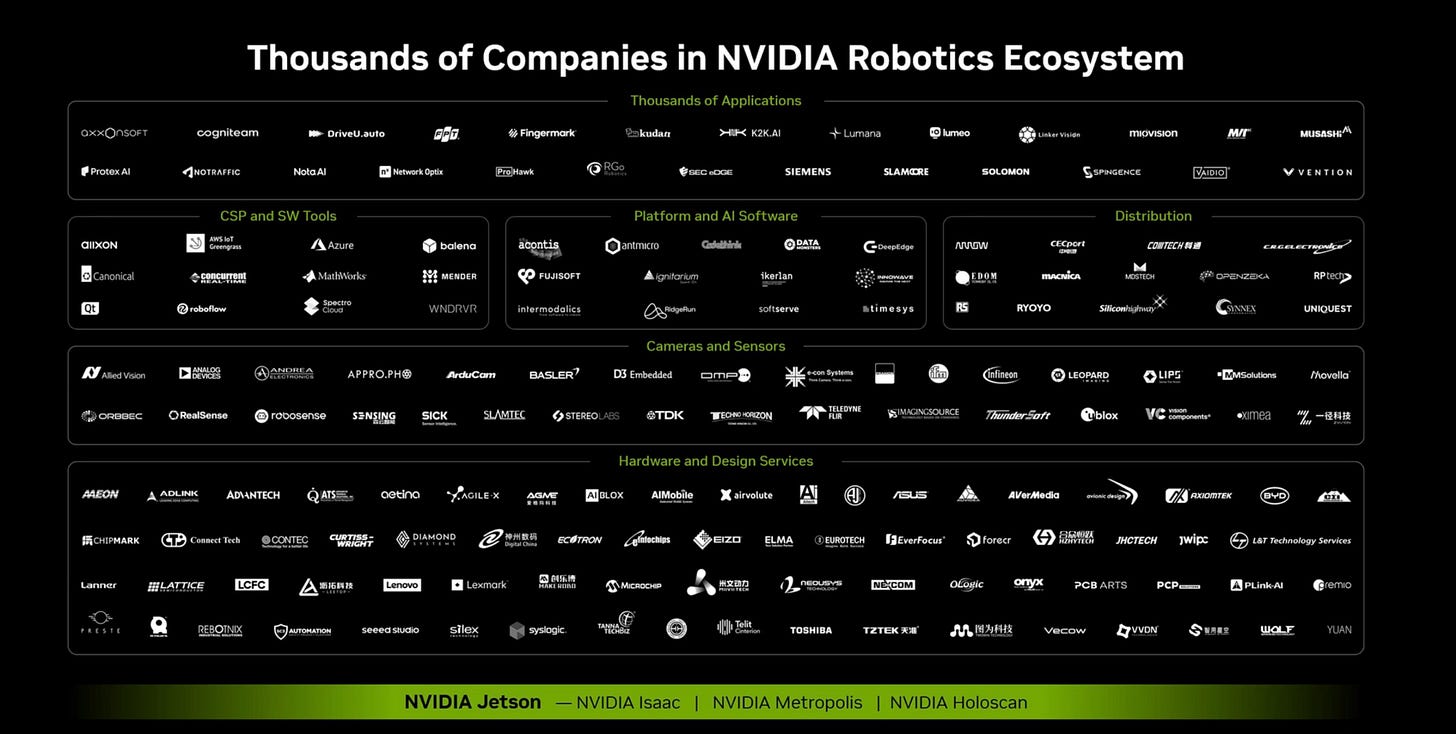

Nvidia has been developing the Jetson family of chips for over a decade and has attracted a huge ecosystem of hardware and software developers around the world. In fact, Nvidia is the default for most robotics startups and production robots. Rounding out the Blackwell-based GPU family, Thor is now in full production, with leadership performance and the CUDA software stack to create robots, drones, and manufacturing arms for just about every industry.

At Thor’s launch, Nvidia shared a slide that shows the thousands of companies that constitute the Nvidia robotics ecosystem. As you can see below, this market is already in full swing.

Jetson Thor, announced in March at GTC, is a dense System on Chip (SoC) that can process data in 8-bit formats and now in the new NVFP4 (Nvidia 4-bit floating point) format, which Nvidia enabled on the entire Blackwell line last week. With NVFP4 on the faster Blackwell GPU and the speculative decoding we covered in another blog last week, Thor delivers nearly ten times the performance of its Orin predecessor, with more memory, faster I/O, and a power envelope of up to 130 watts for the entire module, including memory.

Now, 130 watts could be more power than is available for some applications, so Nvidia is also planning a 40W version later this year. But even that level of power is too high for some applications, like small drones, creating an opening for Qualcomm and others that offer lower power, though at lower performance.

But do robots really need 2 petaflops of performance? Nvidia answered this question by showing that Thor is its first robotics platform to come in below 50ms per output token, with a time to first token well under the 200ms needed for real-time multimodal AI. And Thor is the first Jetson SoC to support MIG, Nvidia’s Multi-Instance GPU feature, which lets a single GPU be shared by multiple models simultaneously, ideal for agentic AI.
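To put those two latency thresholds in perspective, here is a rough sketch of how they combine into a response time. The function names and the 20-token reply length are my own illustrative assumptions, and the 200ms and 50ms values are used as upper bounds from the text, not as measured Thor results.

```python
def tokens_per_second(per_token_ms):
    """Steady-state generation rate implied by a per-token latency."""
    return 1000.0 / per_token_ms


def response_time_ms(ttft_ms, per_token_ms, num_tokens):
    """Time to emit num_tokens: the first token, then the rest one at a time."""
    if num_tokens < 1:
        raise ValueError("num_tokens must be at least 1")
    return ttft_ms + per_token_ms * (num_tokens - 1)


# Thresholds from the text, treated as worst-case bounds (assumption).
TTFT_MS = 200.0
PER_TOKEN_MS = 50.0

print(tokens_per_second(PER_TOKEN_MS))          # 20.0 tokens per second
print(response_time_ms(TTFT_MS, PER_TOKEN_MS, 20))  # 1150.0 ms for a hypothetical 20-token reply
```

In other words, a platform that only just meets both thresholds would produce about 20 tokens per second and finish a short 20-token reply in a little over a second. Anything faster than the thresholds shortens that proportionally.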

Key Takeaway

With Thor, Nvidia remains in the leadership performance position for vision-rich and compute-intensive robotics, and should continue to dominate the high end of the robotics market. Nvidia is likely to come out with lower-performance, lower-power versions, as it has done with previous Jetsons like the Nano. That will help it fend off the lower-power alternatives from Qualcomm and others, but low power isn’t really Nvidia’s strength; blazing performance is.

This article originally appeared on my Substack.