This startup stands alone with its innovative Wafer-Scale Engine for AI and HPC

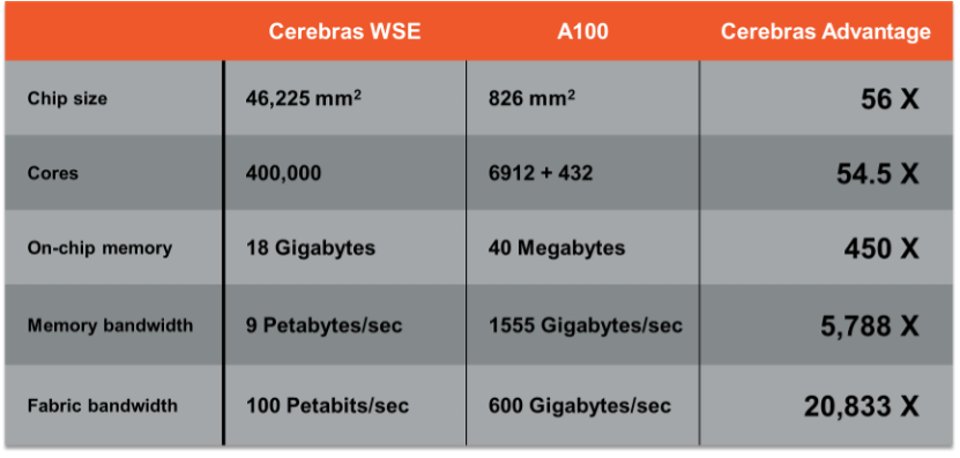

Let’s take an updated look at one of the most interesting AI startups: Cerebras, which came out of stealth in August 2019. The company has an unusually aggressive design, using an entire 16nm wafer as a single “chip” along with a custom CS-1 system for power and cooling. The “chip” contains over 400,000 cores and 18 GB of on-die SRAM, linked by a proprietary 100 Pb/s fabric, and the system consumes some 20 kW of power. The unique approach has gained the attention of the US DOE supercomputing centers, first at Argonne National Laboratory, which has purchased at least one of the ~$2M systems.
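To put those headline numbers in perspective, here is a rough back-of-envelope sketch that divides the quoted totals evenly across the cores. It assumes decimal gigabytes, treats the quoted 400,000 as the core count (the real count is higher), and uses whole-system power, which also covers cooling and I/O. The variable and function names are illustrative only, not anything from Cerebras.

```python
# Back-of-envelope figures derived from the specs quoted in the article.
CORES = 400_000           # "over 400,000 cores" (a lower bound)
SRAM_BYTES = 18 * 10**9   # 18 GB of on-die SRAM, assuming decimal gigabytes
SYSTEM_WATTS = 20_000     # "some 20 kW" for the whole CS-1 system


def per_core(total, cores=CORES):
    """Return an even per-core share of a wafer-wide total."""
    return total / cores


sram_kb_per_core = per_core(SRAM_BYTES) / 1_000        # bytes -> kilobytes
milliwatts_per_core = per_core(SYSTEM_WATTS) * 1_000   # watts -> milliwatts

print(f"SRAM per core: ~{sram_kb_per_core:.0f} KB")
print(f"System power per core: ~{milliwatts_per_core:.0f} mW")
```

Because the actual core count exceeds 400,000, both results are best read as approximate upper bounds: a few tens of kilobytes of local memory and a few tens of milliwatts per core.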

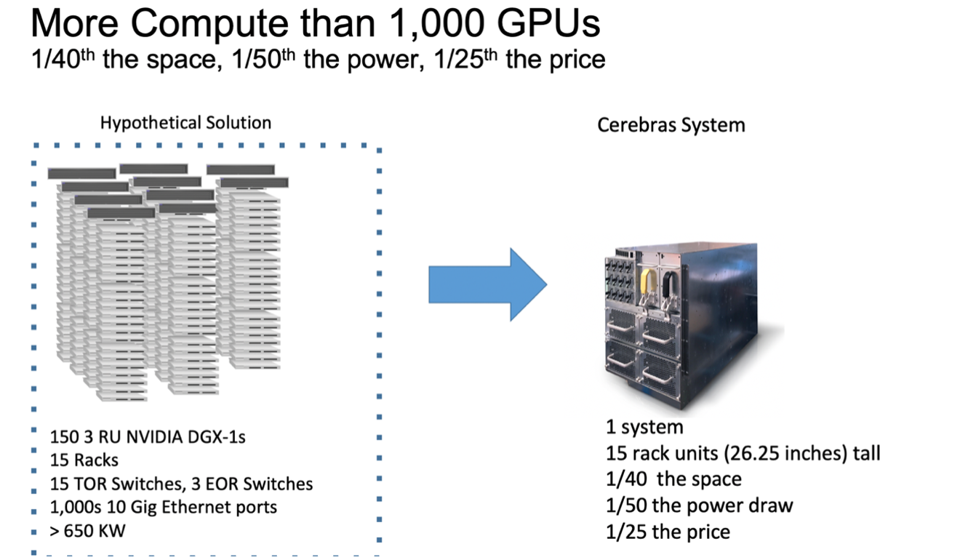

The theory is compelling and straightforward: the CS-1 should be a more efficient accelerator, and it should be easier to deploy a single massive system than to spread a workload across hundreds or thousands of small, distributed servers. In practice, conversations with the head of Argonne National Laboratory (ANL) indicated that the platform is promising. Since the ANL announcement, Cerebras has announced deployments at Lawrence Livermore National Laboratory (LLNL), Pittsburgh Supercomputing Center, Edinburgh Parallel Computing Centre (EPCC), and GlaxoSmithKline. The last of these is significant in terms of commercial software and model development.

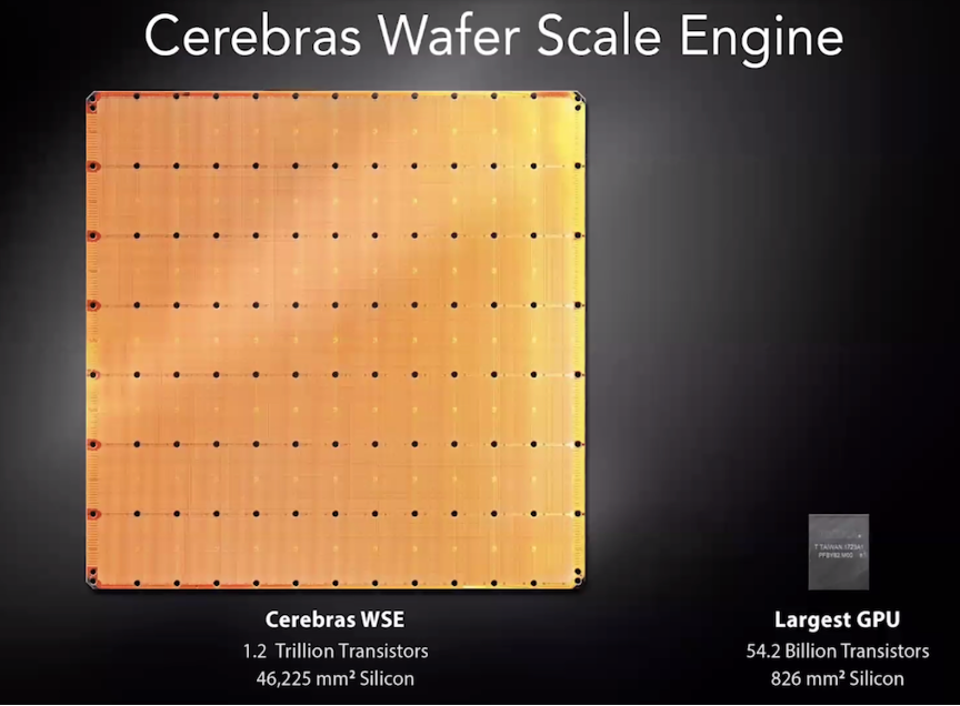

The Cerebras “chip” is composed of interconnected dies on a single wafer, designed by Cerebras and … (Cerebras Systems)

A single 15U CS-1 system purportedly replaces some 15 racks of servers containing over 1,000 GPUs. We won’t even ask about TOPS, which are mostly meaningless here: the system’s value is in the memory and interconnect, not just the cores.

Cerebras will win any comparison with any other chip since it is composed of eighty chips. (Cerebras Systems)

Last year, Cerebras announced a commercial customer, GlaxoSmithKline; a second DOE installation at Lawrence Livermore National Laboratory; and a multi-system win at Pittsburgh Supercomputing Center. The company recently announced a design win with HPE at EPCC, the supercomputing center at the University of Edinburgh.

Interestingly, Cerebras also announced work with the US Department of Energy’s National Energy Technology Laboratory (NETL), in which the CS-1 set a performance record on a non-ML workload. In a computational fluid dynamics model, the type used to model the combustion chamber of a power plant, for example, the CS-1 was more than 200 times faster than the Joule supercomputer housed at NETL.

As CEO Andrew Feldman says, “In AI hardware, you can go high, or you can go low. Anything in between is dead.” We think it is safe to assume that the Cerebras system will remain in a class by itself for the foreseeable future.

The Cerebras CS-1 system could be a dramatic departure for data centers looking for maximum … (Cerebras Systems)

Strengths: The bold wafer-scale design uniquely positions Cerebras as having the highest-performing and highest-power AI server in the industry. The communication and shared-memory benefits of this design should be massive.

Weaknesses: As with all startups, Cerebras’ success may come down to optimizing software to run across the WSE, particularly the ability to share the wafer among secure, private tenants for cloud processing. We would note that early adopters like the DOE can ameliorate this challenge.