Intel has adopted the “Domain-Specific Architecture” strategy espoused by John L. Hennessy, Alphabet Chairman and former President of Stanford University. Consequently, the company has at least one of everything: CPUs, GPUs, ASICs, and FPGAs. While this may look like throwing everything against the wall to see what sticks, it may also be the epitome of heterogeneous computing. However, this approach places a significant burden on software developers, which is why Intel has been developing its oneAPI software toolset.

Intel has relied on Xeon for AI inference processing, Movidius for embedded AI, and Mobileye for automotive image processing. For data center processing, Intel acquired Nervana Systems in 2016, then Habana Labs in December 2019. Last quarter, Intel won a design win with AWS for training. AWS claimed the Habana Labs platform would deliver a 40% price/performance advantage over a leading GPU, presumably the NVIDIA A100, although AWS did not say so explicitly. AWS told us that this claim is based on a basket of AI workloads representing 80% of the AI that runs on AWS.
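A “40% price/performance advantage” can be read in more than one way, and the two most common readings give quite different numbers. The sketch below works through both. It is only an illustration of the arithmetic; the function names are ours, and AWS did not say which reading it intended.

```python
# Two readings of a "40% price/performance advantage" (illustrative only;
# the source does not say which one AWS meant).

def cost_saving_from_perf_gain(gain: float) -> float:
    """Reading A: performance per dollar rises by `gain`.
    Returns the fraction of cost saved for the same amount of work."""
    return 1.0 - 1.0 / (1.0 + gain)


def perf_gain_from_cost_saving(saving: float) -> float:
    """Reading B: the same work costs `saving` less.
    Returns the implied increase in performance per dollar."""
    return 1.0 / (1.0 - saving) - 1.0


claimed = 0.40
print(f"Reading A: {claimed:.0%} more work per dollar "
      f"= {cost_saving_from_perf_gain(claimed):.1%} lower cost for the same work")
print(f"Reading B: {claimed:.0%} lower cost for the same work "
      f"= {perf_gain_from_cost_saving(claimed):.1%} more work per dollar")
```

Under the first reading the saving on a fixed workload is a little under 29%; under the second, the work done per dollar rises by about two thirds.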

What is Gaudi and is it important to Intel?

The Gaudi chip addresses the need for larger memory capacity by exploiting “model parallelism” across its 8x100Gb on-die standard Ethernet fabric, which scales out to thousands of nodes, as we covered here. Scale-out will become critical as DNN models continue to grow in size and complexity, doubling every 3.5 months. The Gaudi fabric supports RDMA over Converged Ethernet (RoCE). RoCE is a big deal; by putting it on the chip, Intel can now offer eight very fast (100Gb) interconnect ports without an expensive Network Interface Card (NIC), which can cost well over $1,000, plus the cost of an expensive (>$10K) top-of-rack switch. RDMA can significantly simplify the programmer’s task of accessing shared memory across the fabric, and it improves performance compared with software-based memory sharing, which consumes CPU cycles.
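To put the figures above in perspective, here is a back-of-the-envelope sketch of the arithmetic. It uses only the numbers quoted in this article (eight ports, 100Gb each, doubling every 3.5 months); the function names are ours, and the bandwidth is raw line rate with no allowance for protocol overhead.

```python
# Back-of-the-envelope arithmetic for the scale-out figures quoted above.
# Inputs come from the article; everything else is illustrative.

PORTS_PER_CHIP = 8            # on-die Ethernet ports
PORT_SPEED_GBIT = 100         # Gb/s per port
DOUBLING_PERIOD_MONTHS = 3.5  # quoted doubling time for DNN models


def aggregate_bandwidth_gbit(ports: int, speed_gbit: float) -> float:
    """Raw aggregate line rate in Gb/s, ignoring protocol overhead."""
    return ports * speed_gbit


def growth_factor(months: float, doubling_period_months: float) -> float:
    """Multiplicative growth after `months` at a fixed doubling period."""
    return 2.0 ** (months / doubling_period_months)


total_gbit = aggregate_bandwidth_gbit(PORTS_PER_CHIP, PORT_SPEED_GBIT)
print(f"Aggregate fabric bandwidth per chip: {total_gbit:.0f} Gb/s "
      f"({total_gbit / 8:.0f} GB/s)")

for months in (12, 24):
    factor = growth_factor(months, DOUBLING_PERIOD_MONTHS)
    print(f"Growth after {months} months: about {factor:.1f}x")
```

A 3.5-month doubling time compounds to roughly 10.8x in one year and roughly 116x in two, which is why a single chip's memory and interconnect cannot keep pace without scale-out.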

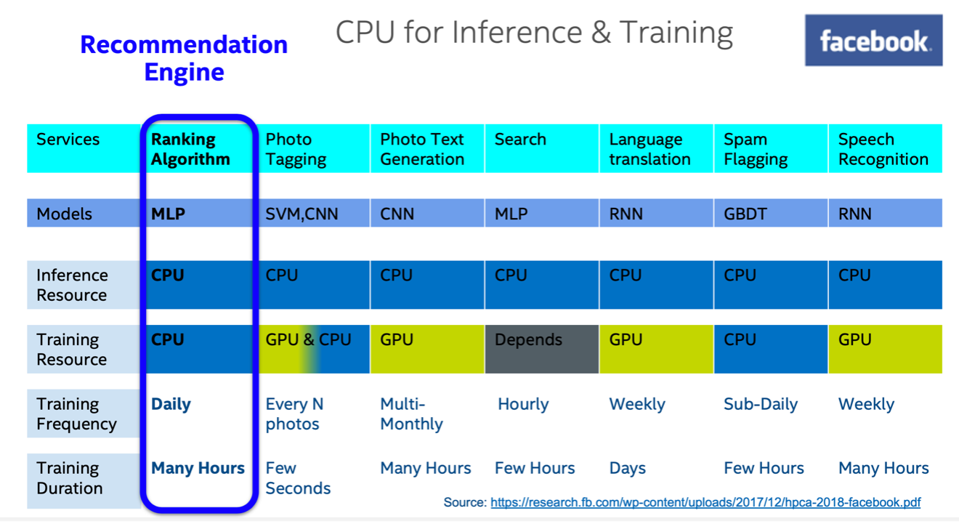

While we are encouraged to see progress on the Habana front, using Intel Xeon for inference and even some training work has its backers as well. Facebook has shared insights into the company’s AI infrastructure, pointing to the massive use of Xeon CPUs for work such as recommendations, multilayer perceptrons, and inference processing across the board.

It is not clear to us that this situation will remain stable, as Facebook has shown a great deal of interest in other chips, including Qualcomm’s Cloud AI 100 and an internally developed inference processor, Kings Canyon. Facebook processes over 200 trillion predictions and over 6 billion language translations every day, so an efficient inference processor is critical to Facebook’s operations.
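Daily totals of this size are easier to grasp as sustained per-second rates. The sketch below converts the two figures quoted above. It assumes load is spread evenly across the day, which real traffic is not, and since both figures are stated as “over,” the results are lower bounds.

```python
# Convert the quoted daily volumes into average per-second rates.
# Assumes an even spread over 24 hours (a simplification).

SECONDS_PER_DAY = 24 * 60 * 60  # 86,400


def per_second(daily_total: float) -> float:
    """Average rate per second for a given daily total."""
    return daily_total / SECONDS_PER_DAY


daily_predictions = 200e12   # "over 200 trillion predictions"
daily_translations = 6e9     # "over 6 billion language translations"

print(f"Predictions:  at least {per_second(daily_predictions):,.0f} per second")
print(f"Translations: at least {per_second(daily_translations):,.0f} per second")
```

That works out to more than two billion predictions every second on average, so even small gains in efficiency per inference add up quickly.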

Can people use Xeon for AI?

Facebook AI processes trillions of queries every day. While the company uses GPUs, it also makes … (Credit: Facebook)

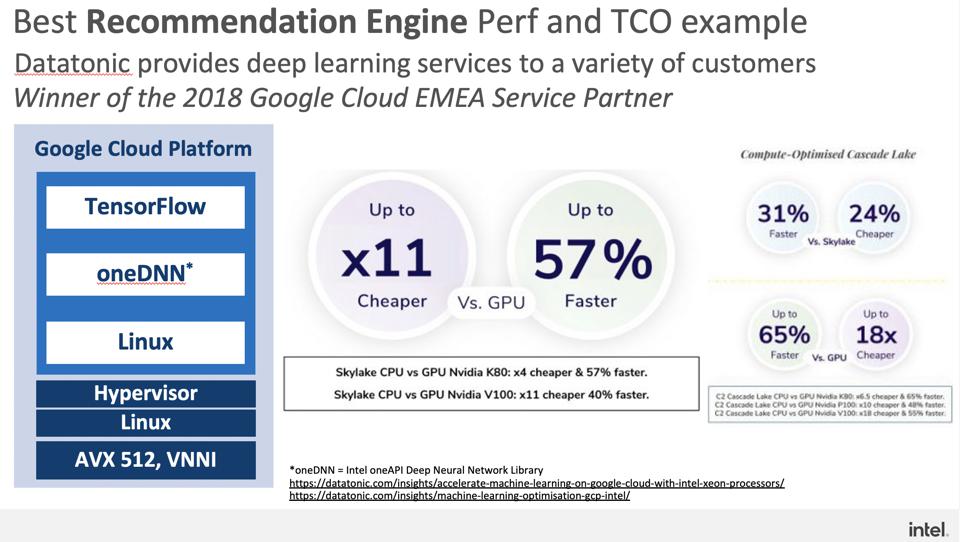

To drive the point home, Intel partner Datatonic has shared its experience using Xeon for AI, reporting faster inference performance and significant savings. Note that this data compares Xeon against older NVIDIA GPUs, not the state-of-the-art NVIDIA A100. Nonetheless, we find the assertion impressive and, frankly, surprising.

Intel customer Datatonic shared recently that they have measured significantly faster and less … (Credit: Intel)

What is the future of AI at Intel?

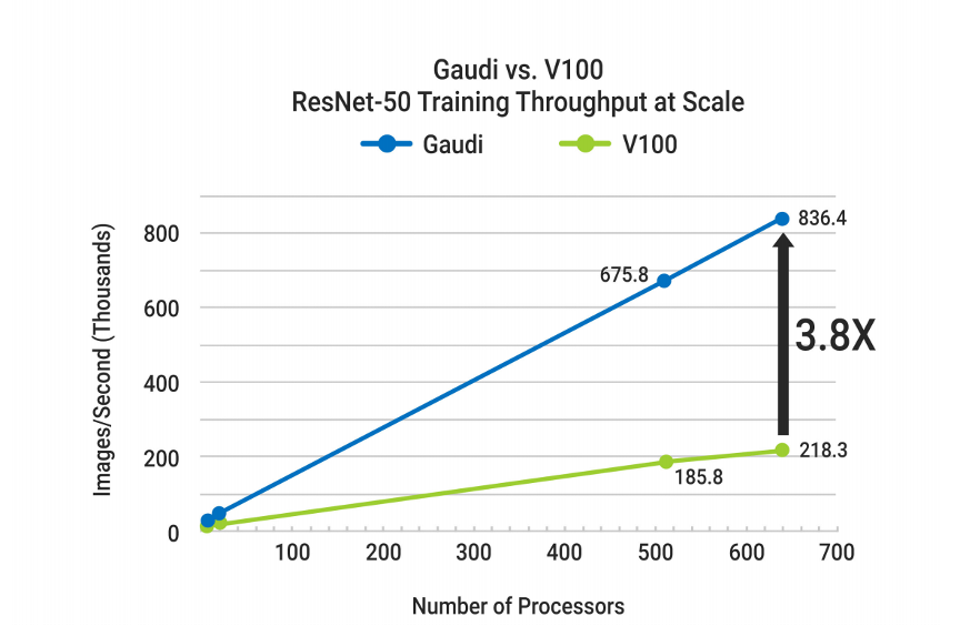

We expect more design wins for the Habana Gaudi training chip in the first half of 2021, fueled by the AWS design win. Gaudi has unique advantages in large-scale processing, and we see it as a leading contender for training workloads. Its excellent scalability is a key differentiator for Intel.

Intel’s Gaudi platform provides excellent scalability thanks to the on-board 100Gb Ethernet with … (Credit: Intel)

As for the Habana Goya inference processor, we are less confident of future design wins for two-year-old technology. We would not be surprised if Intel decides to shelve Goya and focus on Xeon for data center inference processing. That would make a lot of sense to us, as Xeon has already received enhancements for AI acceleration, and more are certainly in the works. New CEO Pat Gelsinger will face pressure to cut unprofitable products and acquisitions. Goya seems to fit the bill unless, of course, unannounced customer deployments are in place and the next-generation chip is impressive.

Strengths: Intel has one of everything, which holds promise if the concept of domain-specific architectures can overcome the inherent software challenges. Intel Research is a potential strength in AI, as is oneAPI when and if it matures.

Weaknesses: Intel must resolve production problems. It has taken too long to get Habana Gaudi to market, giving competitors time to catch up to or surpass Intel. We have not heard of any Habana Goya production installations for inference processing.

Disclosures: This article expresses the opinions of the author and is not to be taken as advice to purchase from or invest in the companies mentioned. My firm, Cambrian AI Research, is fortunate to have many, if not most, semiconductor firms as our clients, including NVIDIA, Intel, IBM, Qualcomm, Blaize, Graphcore, Synopsys, SimpleMachines, and Tenstorrent. We have no investment positions in any of the companies mentioned in this article. For more information, please visit our website at https://cambrian-AI.com.