The Graphcore disaggregated accelerator could be a game changer.

I have recently finished a research paper looking into the data center architecture for deploying the Graphcore IPU-Machine, which is a network-attached accelerator for highly-parallel workloads. Lets look into what I found, but if you want to see the in-depth report, the paper is freely available on my website here.

The second-generation Intelligence Processing Unit comes in a one-rack-unit (1U) building block, the IPU-M2000, with four IPU accelerators. As we will see, this platform enables a high level of scaling, a large amount of memory for ever-increasing model sizes, and a flexible configuration of CPU servers, storage, and networking. The architecture’s fabric supports low-latency communications between IPUs within a node, within a rack, and across a data center containing hundreds or even thousands of accelerators to handle exponentially increasing AI model complexity. This platform supports a comprehensive software stack to develop and optimize workloads using open-source frameworks and Graphcore-developed tools.

The IPU-Machine Layout

The IPU-Machine consists of four IPUs, a gateway chip, up to 450GB of DRAM, and network connections, along with dual power supplies and an innovative, self-contained liquid cooling system. The idea is simple: interconnect a series of IPU-Machines and dynamically connect them to servers in whatever server/IPU ratio makes sense.

Networking the IPU-Machine

The IPU-M2000 enables communications networking for a scalable fabric of IPU-Machines, storage, and servers. The networking is comprised of two 100Gb Ethernet links to a host server(s), four 512Gb/s IPU-Fabric links (2.8 Tb/s) that interconnect up to 64 IPUs (an IPU-POD64), two 100Gb Ethernet for inter-POD networking, and a “Sync-Link” to initiate the bulk synchronous parallel (BSP) model.

The fabric is designed from the ground-up for AI, using an efficient, low-level point-to-point protocol that is “compiled in,” reducing the overhead of traditional ethernet packets between racks (PODs). The two inter-POD links run this same protocol across racks over Ethernet physical layers. The fabric enables collectives and all-reduce operations that are managed and pre-determined at compile time.

IPU-POD Reference Designs

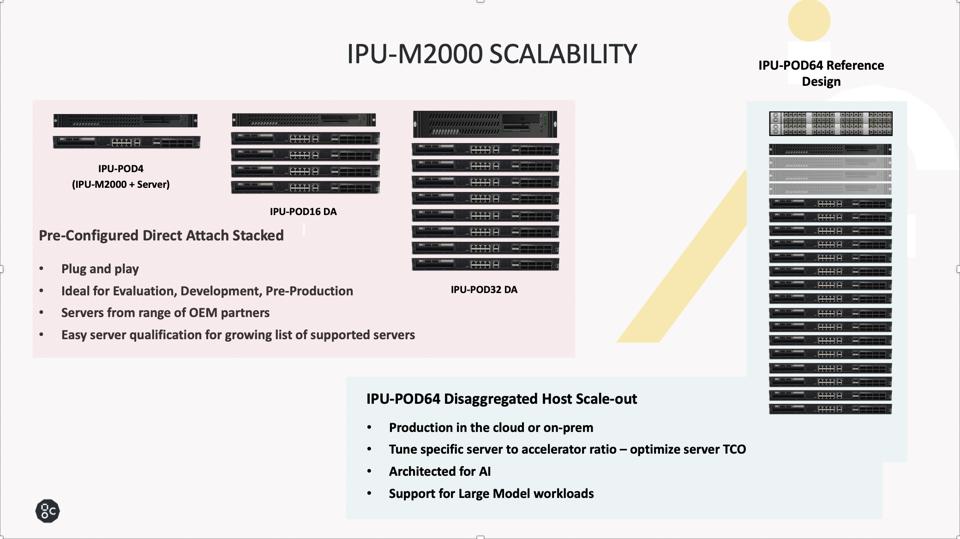

Graphcore realizes that selecting, configuring, and testing a customer data center architecture for the IPU could cost customers precious time and money. Consequently, the team has pre-configured POD reference designs that can easily be acquired and installed with the knowledge and comfort that Graphcore has thoroughly vetted the complete system. The IPU-POD building blocks start small at 4 IPUs (one IPU-Machine), which then simply scales to 8, 16, 32, and 64 IPU clusters with pre-configured, direct-attached networking and a single server. Once one grows to or beyond an IPU-Pod64 design, the flexible number of servers and storage reside in a separate server and storage rack.

The IPU-POD64 offers a rack of IPU Machines, a server, and a Top of Rack Networking switch. Graphcore

Compiled-in networking with the Graphcore Communications Library creates a 16 Peta-Flop compute pool without any internal switches or network management. This approach enables each IPU-M2000 in a rack to address any other IPU in the POD system as a neighbor, offering seamless scaling when performing large group collective functions (All-gather, All-reduce, Scatter).We find this approach to be simple, elegant, and likely very efficient at scale. We hope to see benchmarks soon that would validate the architectural advantages.

The Benefits of Host Disaggregation

The key benefits of this approach are:

1. Optimized performance for models that require more servers than, say, two CPUs for 4 or 8 accelerators,

2. Lower costs for models that require fewer CPU’s by avoiding over-provisioning,

3. A flexible data center infrastructure that can handle both,

4. Servers can reside in utility racks for optimal rack power utilization and serviceability.

Conclusions and Recommendations

The simplicity and elegance of the Graphcore building-block approach are impressive. One can start with a single IPU-Machine, then build up to 16, 32, and 64 PODs as needed. We hope to learn soon how well this architecture supports models running at scale. The realized performance will depend on the Poplar Software stack, especially the compiler’s ability to take advantage of the fabric we have described.

We recommend that any organization that demands AI or other appropriate parallel computing capacities at a significant scale explore this unique approach.