While Gigabyte builds EPYC Server with Qualcomm AI Chips!

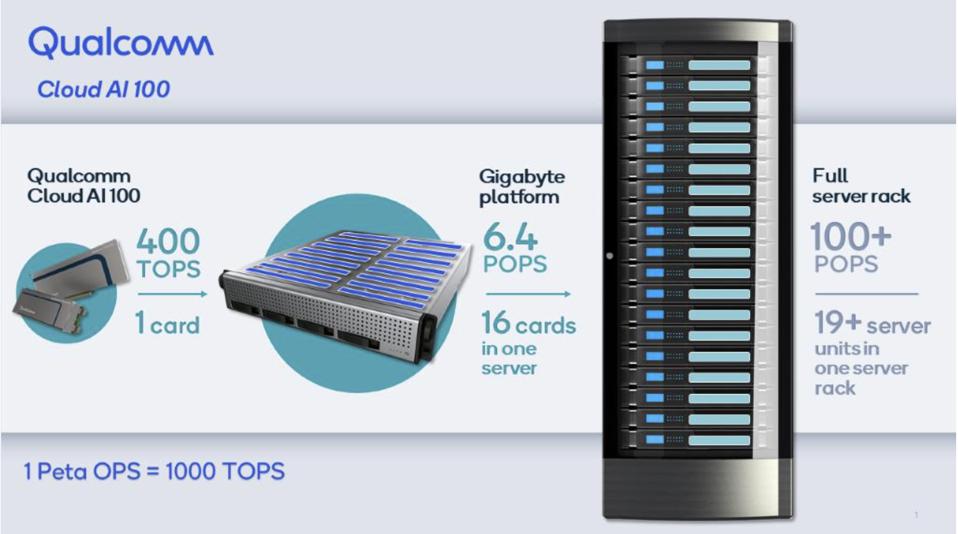

Honestly, I struggled to decide which of these two announcements deserves headline status. Both are pretty exciting. One extends AMD’s leadership over Intel in server CPUs, and the other combines these fast CPUs with the Qualcomm Cloud AI100 accelerators. Since the Gigabyte server is the first product to feature these 400 TOPS inference engines, this is a major milestone. So skip to the end for that, but I will start with the EPYC launch, which is pretty epic in its own right.

What did AMD announce, and how does it compare?

As expected, AMD has announced the third generation of the company’s EPYC server CPUs, claiming double the performance of Intel’s competing Xeon chips. The new EPYC 7003 increases per-cycle performance over its predecessor by 19% and doubles the performance of the 8-bit integer operations used in AI inference processing. Interestingly, AMD claims that the new CPU offers the world’s fastest performance per chip and per core. With more and faster cores than Intel Xeon, AMD says the new EPYC 7003 can deliver twice the performance of Intel’s fastest product in cloud, enterprise, and HPC workloads.

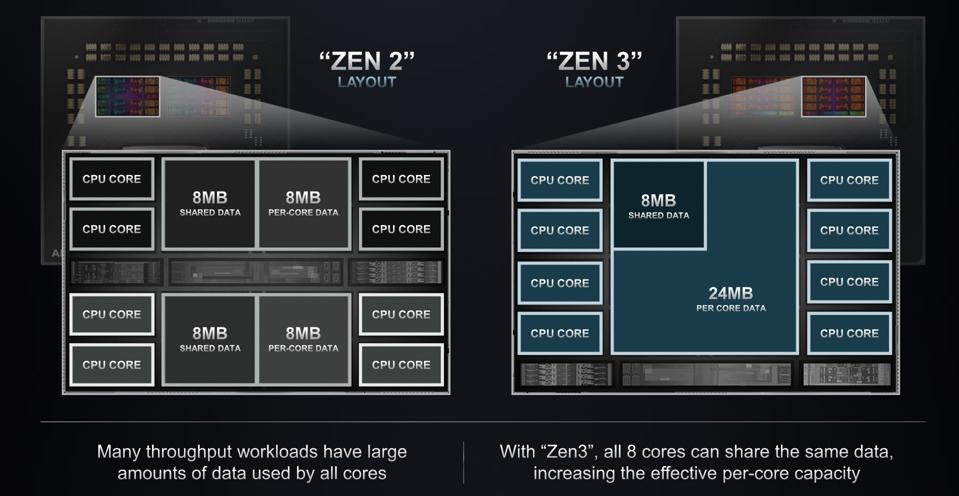

Like its predecessor, the 7003 family demonstrates how designers increasingly use “chiplets” instead of building a massive, hard-to-produce monolithic die. The EPYC 7003 SoC layout is a central hub die connected to up to eight processor dies, each with eight cores. The hub die supports up to 8 memory channels and the usual I/O. Each Zen 3 processor die now has 32MB of L3 cache shared across its 8-core complex, in addition to the L2 cache dedicated to each core.

AMD has significantly enlarged the L3 cache on the new 7003 to 32 MB. AMD

By implementing native 8-bit integer operations, which double AI inference throughput, AMD has for the first time closed a huge AI performance gap that Intel has enjoyed. Until now, a cloud service provider had to use Xeons for AI and AMD for everything else. That’s really awkward for users and CSPs alike. Good move, AMD.

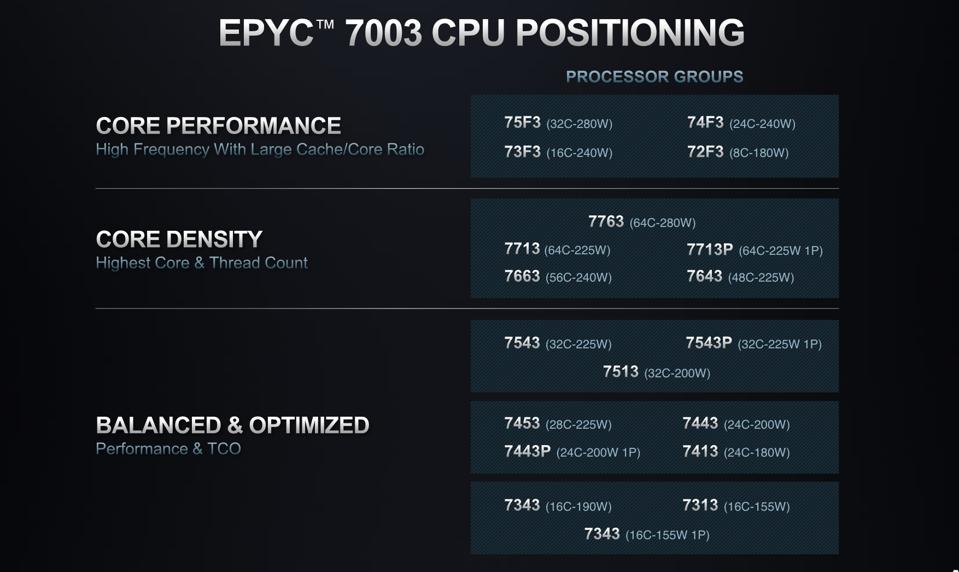

AMD has grouped the EPYC SKUs into three classes: fastest performance per core for workloads like HPC, largest number of cores for maximum Virtual Machines per server, and “Balanced & Optimized” for lower core count and cost.

The EPYC 7003 is available in a wide range of SKUs optimized for specific workload requirements. AMD

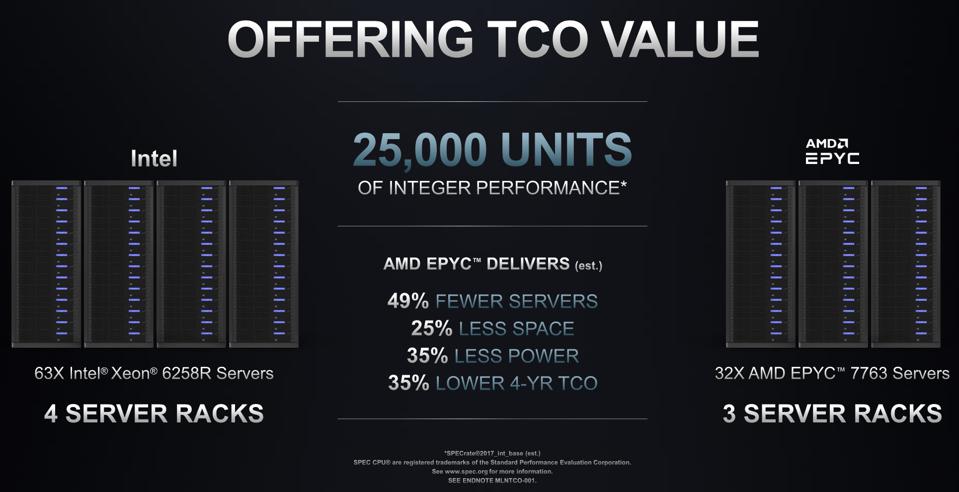

Let’s get back to the largest CPU customers, the CSPs. AMD claims the new EPYC chips can lower Total Cost of Ownership (TCO) by 35% compared to the competition. That is huge. This advantage should accelerate AMD’s market share gains over the next several years, until Intel’s new CEO Pat Gelsinger fixes Intel’s manufacturing problems and single-die-centric product roadmap. Previously, AMD had touted lower TCO based on having more cores per server, but suffered an absolute per-core performance disadvantage that put off some potential adopters. That deficit no longer exists.

AMD claims the EPYC 7003 can deliver 25,000 units of integer performance at 35% lower TCO. AMD

And now for the AI surprise!

Concurrent with the EPYC launch, Gigabyte and Qualcomm have announced a new AI server with two EPYCs and 16 Cloud AI100s. Each AI100, as I have often reported, delivers 400 Trillion Operations Per Second (TOPS) while sipping only 75 watts of power in a half-height, half-length PCIe card. So, 16 cards × 400 TOPS = 6.4 Peta OPS (POPS, or a thousand trillion operations per second) in a single server, and over 100 POPS in a full server rack. This is just crazy performance and power efficiency, some 10 times better than anything out there. In my annual forecast, I predicted that Qualcomm would announce availability and a customer in the first half of 2021. One down, one to go.
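For readers who want to check the math, here is a rough back-of-the-envelope sketch in Python. The per-card TOPS, card power, and cards per server come from the figures quoted above. The number of servers per rack is my assumption (16 is simply the smallest count that clears 100 POPS), and the power figure covers the accelerator cards only, not the CPUs, memory, or cooling. These are peak numbers, not measured throughput.

```python
# Back-of-the-envelope check of the Gigabyte / Cloud AI100 figures.
TOPS_PER_CARD = 400     # peak TOPS per Cloud AI100 card (quoted in the article)
WATTS_PER_CARD = 75     # card power in watts (quoted in the article)
CARDS_PER_SERVER = 16   # AI100 cards per Gigabyte server (quoted in the article)
SERVERS_PER_RACK = 16   # ASSUMPTION: the article does not state servers per rack


def server_tops(cards=CARDS_PER_SERVER, tops_per_card=TOPS_PER_CARD):
    """Peak TOPS for one server: cards times TOPS per card."""
    return cards * tops_per_card


def to_pops(tops):
    """Convert TOPS to POPS (1 POPS = 1,000 TOPS)."""
    return tops / 1000


def rack_pops(servers=SERVERS_PER_RACK):
    """Peak POPS for a rack holding the assumed number of servers."""
    return servers * to_pops(server_tops())


def card_tops_per_watt():
    """Peak efficiency of a single card in TOPS per watt."""
    return TOPS_PER_CARD / WATTS_PER_CARD


def server_accelerator_watts():
    """Power drawn by the accelerator cards alone in one server."""
    return CARDS_PER_SERVER * WATTS_PER_CARD


if __name__ == "__main__":
    print(f"Per server: {to_pops(server_tops()):.1f} POPS")
    print(f"Per rack ({SERVERS_PER_RACK} servers, assumed): {rack_pops():.1f} POPS")
    print(f"Per card: {card_tops_per_watt():.2f} TOPS/W")
    print(f"Accelerator power per server: {server_accelerator_watts()} W")
```

With 16 servers assumed per rack, the rack total comes to 102.4 POPS, which is consistent with the "over 100 POPS" claim, and the 16 cards in one server would draw about 1.2 kW between them.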

The new Qualcomm Cloud AI100 server from Gigabyte can deliver over 100 PETA-OPS per rack, at far … Qualcomm

Skeptics have pointed out that QTI (Qualcomm’s technology arm) has been talking up the AI100 for almost two years, but the product has yet to be seen in the wild, and no customers have announced their intentions to use this impressive platform. Well, I believe that Gigabyte, a company that caters to the largest cloud service providers, didn’t just decide to spend the money to build and test a new platform on a whim. Somebody large must have said, “Build this, and I will buy some racks”. But the unknown behemoth must still be evaluating the platform, so Gigabyte decided to get some air time for its efforts while Company X (or “F”?) finishes its evaluation. Stay tuned!

Conclusions

The AMD EPYC design team is like a metronome, delivering new products that beat the competition every two years for three generations. Clearly, AMD is on a path to grow its market share significantly, perhaps from some 10% to 20% or 25% over the next two years. Meanwhile, vendors are using these high-performance, low-cost chips to build hot AI servers, including NVIDIA DGX and now Gigabyte servers.

For those of us who live and breathe AI accelerators, it is refreshing to see the impressive Qualcomm Cloud AI100 being offered in a production server, and possibly soon in a cloud or social media platform. The Cambrian AI Explosion has transitioned from PowerPoint to Silicon to Solutions!