As a bookend to AMD’s EPYC3 launch earlier this week, I thought I should share my thoughts on the company’s AI chip, the MI100, excerpted and updated from the Q1 Competitive Landscape Report. The company’s new GPU is a solid first effort for AMD’s revived data center line and paves the way for a time when upcoming EPYC CPUs are integrated with Instinct GPUs, a combination that could be disruptive to the AI competitive landscape.

AMD launched a data center GPU in Q4 2020, the Instinct MI100, the first chip using the CDNA GPU architecture for data centers. The new chip delivers excellent double-precision floating-point performance for HPC but offers middle-of-the-road AI performance with reduced-precision formats. I suspect this design reflects the priorities of the supercomputer community that AMD serves. This choice is a reasonable die-area trade-off, given AMD’s two DOE exascale design wins, which will use a successor to the MI100. The MI100’s AI performance is good enough to begin the AI development journey toward the Frontier and El Capitan exascale systems due in 2022. To be built by HPE (Cray), these supercomputers will use future AMD EPYC CPUs and Instinct GPUs, which we expect could provide leadership-class AI and HPC performance.

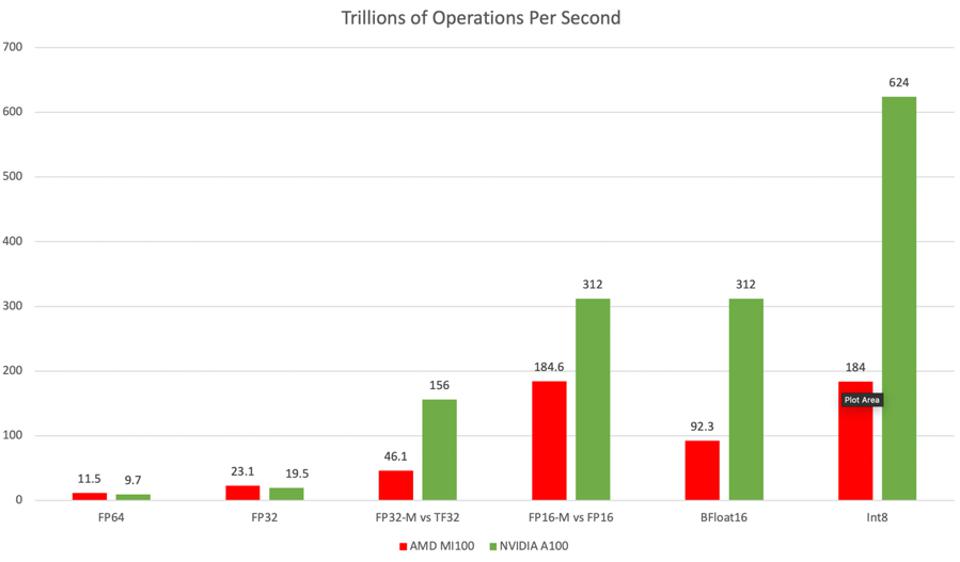

The new AMD GPU for data centers looks great for HPC, and “ok” for AI. Source: NVIDIA, AMD, and author’s analysis

Note that while the performance details in Figure 1 are the only metrics AMD has released to date, Cambrian-AI Research does not believe that TOPS are a substitute for application performance benchmarks such as MLPerf. We hope to see more benchmarks from AMD soon.

At the launch, AMD shared a slide that claimed a 50% lower cost per FLOP than the A100 for HPC apps. The HPC performance described above is about 18% higher than that of NVIDIA’s A100, which by my math would imply an aggressive list price of around $7,200, a price that could help AMD get some attention and traction.
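For readers who want to check the arithmetic, here is a minimal sketch of how the implied price falls out of the two figures above. The 50% cost-per-FLOP claim and the roughly 18% performance advantage come from the text; the A100 price is not stated in this article, so it appears only as a hypothetical input, and the function names are my own.

```python
# Back-of-the-envelope check of the implied MI100 list price.
# The 50% cost-per-FLOP claim and the ~18% HPC advantage come from the text;
# the A100 price is NOT given in the article and is a hypothetical input.

COST_PER_FLOP_RATIO = 0.50  # AMD claim: MI100 cost per FLOP is half the A100's
PERF_RATIO = 1.18           # MI100 HPC performance relative to the A100


def implied_mi100_price(a100_price: float) -> float:
    """MI100 price consistent with the cost-per-FLOP claim, for an assumed A100 price."""
    # price_mi100 / perf_mi100 = ratio * price_a100 / perf_a100
    return a100_price * COST_PER_FLOP_RATIO * PERF_RATIO


def implied_a100_price(mi100_price: float) -> float:
    """A100 price that a given MI100 price would imply under the same claim."""
    return mi100_price / (COST_PER_FLOP_RATIO * PERF_RATIO)


if __name__ == "__main__":
    # Working backwards from the article's estimate of about $7,200.
    print(f"Implied A100 price: ${implied_a100_price(7200):,.0f}")
    # Working forwards from a hypothetical A100 price (an assumption, not a quoted figure).
    print(f"Implied MI100 price: ${implied_mi100_price(12_200):,.0f}")
```

Under these assumptions, a $7,200 MI100 corresponds to an A100 priced at roughly $12,200. Actual street prices vary, so treat the result as a sanity check rather than a quote.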

ROCm Software

ROCm and the MI100 form a respectable first step for AMD customers to prepare for the future. The new chip is an attractive HPC platform and a respectable chip for AI training workloads. But the challenge any NVIDIA competitor must address lies in the software needed to engender an ecosystem of AI models and applications. AMD has been developing its ROCm open compute offering for over three years. The new V4.0 release fills in the missing elements needed to ease porting from NVIDIA to AMD. When combined with the MI100, AMD claims it has delivered an 8X increase in throughput in two years.

AMD shared that customer experience with ROCm has demonstrated the ease of porting. Image: AMD

Conclusions

So, given this analysis, should NVIDIA be worried about AMD’s entry into the data center? In AI, no. In HPC, yes. AMD’s new GPU is an excellent stepping stone to its exascale platform. I expect AMD will be selected by price-sensitive supercomputer installations that may not see a tremendous need for bleeding-edge AI on the same platform in the short term. However, many or even most HPC users see AI as an integral part of the workflow these days. I will eagerly await application performance data, such as MLPerf results for AI, to determine AMD’s competitive strength.

In the longer term, improvements in AI performance and a tighter interconnect with EPYC Rome using the Infinity Fabric could make AMD a significant competitive force. I have previously opined that this approach is one reason why NVIDIA needs to develop a data center-class CPU using Arm.

Meanwhile, the Frontier and El Capitan application ecosystem now has an affordable on-ramp to AMD experimentation and support, especially if Cray creates an MI100/EPYC Rome platform in 2021.

Strengths: Dependable design and product cycle time. EPYC CPU performance leadership. AMD has also built good relationships with the US Government, as well as with major cloud and system vendors through the EPYC and Ryzen products, which it can leverage. In addition, the DOE exascale wins create demand for an on-ramp to AMD technology, which the MI100 provides.

Weaknesses: The AMD software stack for AI remains immature, and the MI100 AI performance may not create sufficient pull to change that in the next 18 months.

Disclosures: This article expresses the opinions of the author, and is not to be taken as advice to purchase from or invest in the companies mentioned. My firm, Cambrian AI Research, is fortunate to have many, if not most, semiconductor firms as our clients, including NVIDIA, Intel, IBM, Qualcomm, Blaize, Graphcore, Synopsys and Tenstorrent. We have no investment positions in any of the companies mentioned in this article. For more information, please visit our website at https://cambrian-AI.com.