Can the mobile chip giant finally break into the data center?

Qualcomm began sampling the Cloud AI 100 to select data center customers last quarter. Based on the limited benchmarks we have seen, performance looks promising, and the power efficiency for inference processing looks world-class. The new platform delivers up to 400 trillion 8-bit operations per second (TOPS) per chip while consuming only 75 watts. A mid-range version cranks out 8 TOPS per watt, an industry-leading mark, although we have repeatedly seen that TOPS does not directly translate to anything but, well, TOPS. The company has shared only a few application benchmarks, so we anxiously await more data as early clients experiment with the platform. If Qualcomm can announce large customer wins in the next six months, we believe the company will become a significant force in the market for data center AI acceleration.
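As a quick sanity check on these figures, peak efficiency is simply peak throughput divided by power. The minimal Python sketch below uses only the numbers quoted above. The helper name `tops_per_watt` is our own, and the results are datasheet ratios, not measured application performance.

```python
def tops_per_watt(peak_tops: float, watts: float) -> float:
    """Return peak TOPS divided by power in watts (a datasheet ratio, not delivered throughput)."""
    if watts <= 0:
        raise ValueError("power must be positive")
    return peak_tops / watts


# Figures quoted in the text for the top Cloud AI 100 part: 400 TOPS (8-bit) at 75 W.
flagship = tops_per_watt(400, 75)

# Qualcomm's quoted efficiency for the mid-range version, in TOPS/W.
mid_range = 8.0

# How much more work per watt the mid-range part does than the flagship.
mid_vs_flagship = mid_range / flagship

print(f"Flagship: {flagship:.2f} TOPS/W")                          # 400 / 75 ≈ 5.33
print(f"Mid-range vs. flagship: {mid_vs_flagship:.1f}x per watt")  # 8 / 5.33 = 1.5
```

By this arithmetic, the 75-watt part delivers about 5.3 TOPS/W, so the mid-range version is roughly 1.5 times more efficient: the fastest chip is not the most efficient one.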

Performance and Power

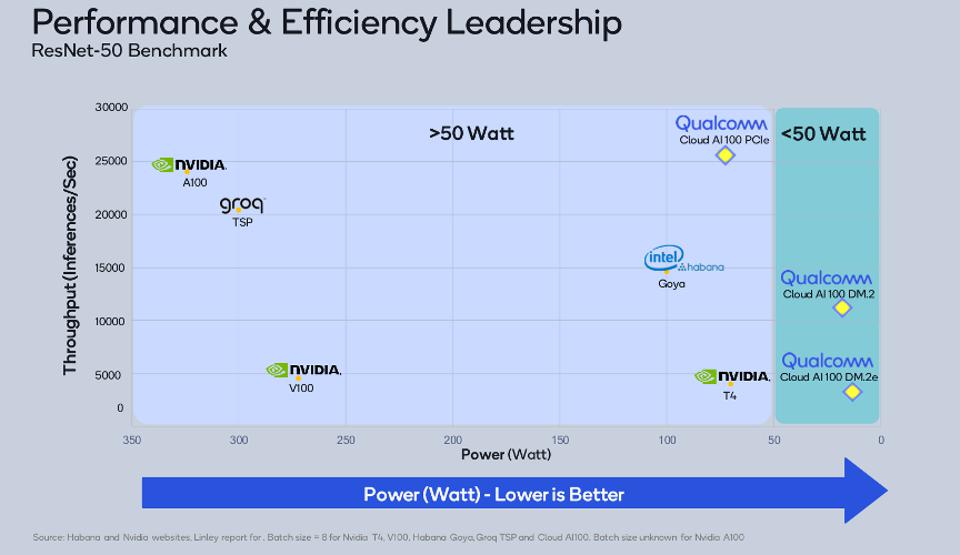

All Qualcomm Cloud AI 100 models are fast, highly efficient inference platforms. Source: Qualcomm Technologies, Inc.

The figure above shows the relative performance and power of the three Cloud AI 100 SKUs versus NVIDIA, Groq, and Intel’s Habana Labs. We would point out that ResNet-50 is a small benchmark by today’s standards, especially when compared to NLP models like Google’s BERT and OpenAI’s GPT-3, which demand significant memory capacity. But this initial data shows an impressive result: the 20-watt Cloud AI 100 M2 delivers roughly 10 times the performance of the 2-year-old NVIDIA T4 while consuming less than a third as much power. Intel’s Goya is four times slower and draws five times as much power. We are eager to see performance data on larger models, such as those in the MLPerf benchmark suite, especially DLRM, as the recommendation market is wide open to new competitors. We may find that the initial design lacks adequate memory capacity for the newer, larger models being deployed.
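Because performance and power ratios compound, the efficiency gap is larger than either number alone suggests: a chip that is k times faster while drawing a fraction p of the power is k/p times more efficient per watt. The Python sketch below applies that to the ratios quoted above. The helper name `relative_perf_per_watt` is our own. Since "less than a third" is only an upper bound on power, the M2 result is a lower bound, and we assume the Goya comparison is made against the same M2 baseline.

```python
from fractions import Fraction


def relative_perf_per_watt(perf_ratio: Fraction, power_ratio: Fraction) -> Fraction:
    """Efficiency of chip A relative to chip B, given A/B performance and A/B power ratios."""
    if power_ratio <= 0:
        raise ValueError("power ratio must be positive")
    return perf_ratio / power_ratio


# Cloud AI 100 M2 vs. NVIDIA T4 on ResNet-50: ~10x the performance at < 1/3 the power.
# The power ratio is an upper bound, so 10 / (1/3) = 30 is a lower bound on the gain.
m2_vs_t4 = relative_perf_per_watt(Fraction(10), Fraction(1, 3))
print(f"M2 vs. T4: at least {m2_vs_t4}x the performance per watt")

# Goya, assumed here to be compared against the M2: 1/4 the performance at 5x the power.
goya_vs_m2 = relative_perf_per_watt(Fraction(1, 4), Fraction(5))
print(f"Goya vs. M2: {goya_vs_m2} of the performance per watt")
```

Exact fractions avoid floating-point noise in the ratios; the script prints a 30x lower bound for the M2 over the T4 and 1/20 for Goya relative to the M2.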

Software for AI

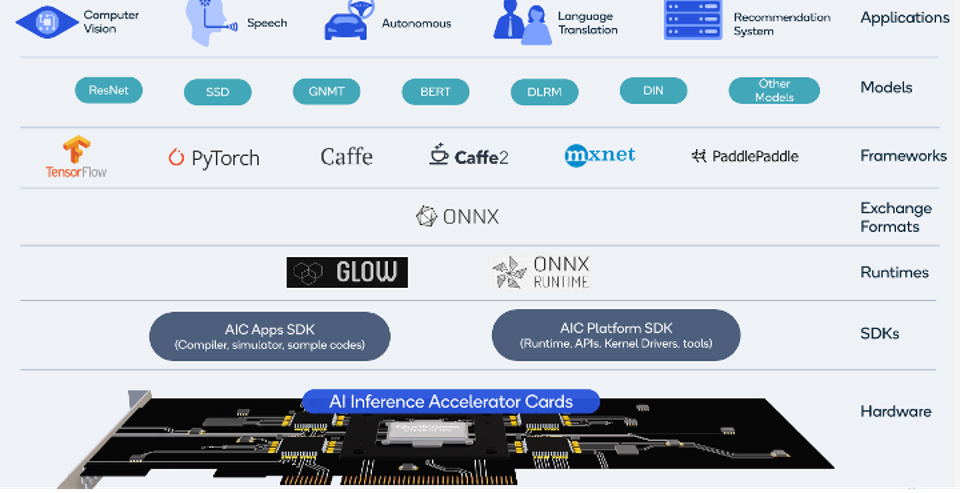

Most AI silicon challengers fail to provide a complete optimized development environment concurrent with their first production chips launch. Qualcomm is a notable exception, having spent years building software and models for the Snapdragon AI Engine. We would assert that QUALCOMM’s AI software story is one of the industry’s most comprehensive, trailing only NVIDIA in breadth and depth. The figure below shows that the stack now includes two software development kits (SDKs) explicitly targeting the Cloud AI100: an AIC Apps SDK, which provides an optimizing compiler, and an AIC Platform SDK containing the requisite runtime libraries, APIs, kernels and tools. A natural language model, BERT, and recommendation engine, DLRM, are also new additions, likely indicators of early customer evaluations now underway for the platform.

Qualcomm offers a comprehensive development stack for AI. Source: Qualcomm Technology Inc.

Should the specs be equal, we believe that many customers would choose to do business with an established semiconductor company like NVIDIA, Intel, or Qualcomm over a startup. Qualcomm provides rock-solid quality, performance, efficiency, and support, as well as a comprehensive software ecosystem for AI inference processing, born from years of experience with Snapdragon. It’s a powerful combination, and we look forward to seeing more benchmarks and customer testimonials.

Strengths: A track record of power-efficient chips and a legacy of AI on Snapdragon. A large, dependable semiconductor supplier.

Weaknesses: Qualcomm has yet to publish the range of benchmarks needed to demonstrate the platform’s potential, and it has not announced any customer design wins.