Qualcomm software supports multiple hardware accelerators in both Snapdragon and Cloud implementations, while keeping development easy.

We have taken a deeper look into the software that makes Snapdragon the best AI platform for mobile devices and enables the Cloud AI100 to deliver the best power efficiency in the MLPerf inference benchmarks, and have published a brief Research Note here. But if you are short on time, here are the punchlines!

Introduction

Qualcomm has been developing AI hardware and software for nearly a decade and has recently expanded beyond its Snapdragon mobile processors to enter the data center market with the Cloud AI100 platform. Starting from a collection of tools specific to individual logic blocks such as the CPU, GPU, and Hexagon processor, the company’s AI software has now matured into an integrated stack for a fused AI hardware platform.

Efficiency for developers of edge and datacenter AI

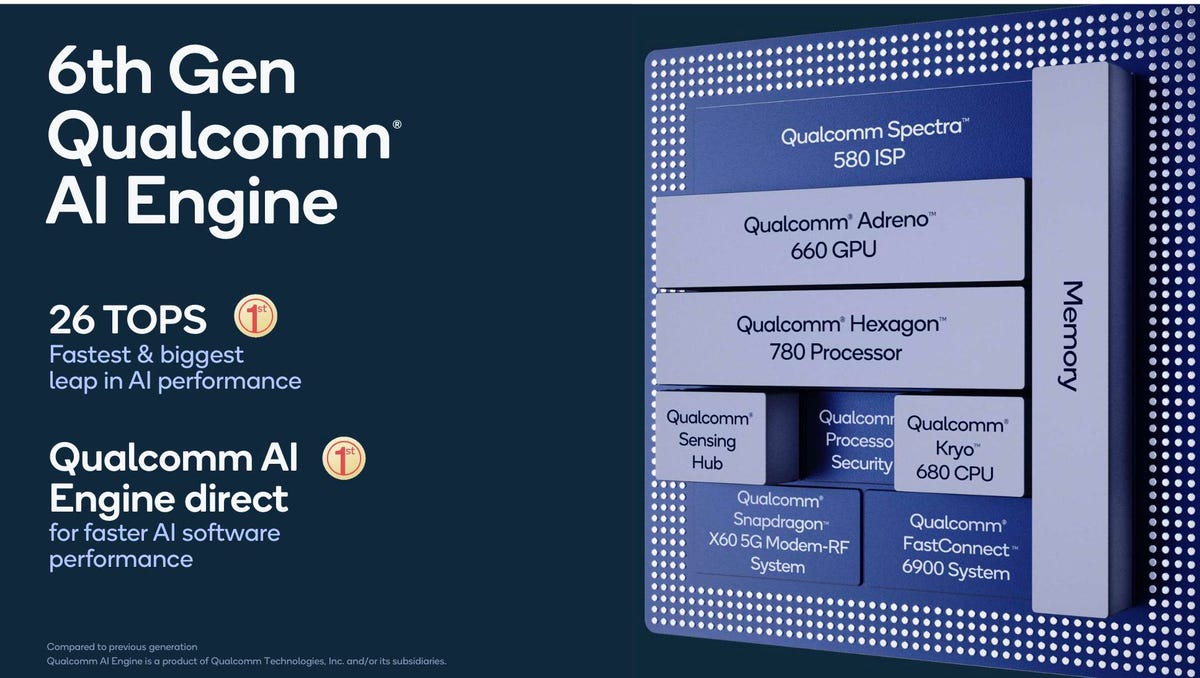

The newest Snapdragon 888 5G Mobile Platform features the company’s 6th generation AI Engine, built around a completely re-engineered Qualcomm Hexagon 780 Processor with a fused AI-accelerator architecture. This brings total Qualcomm AI Engine performance up to 26 TOPS, with a 3X improvement in performance per watt and 16X larger shared AI memory. The Qualcomm Cloud AI100 took that power efficiency to the data center, as evidenced by the most recent MLPerf inference benchmark results, wherein Qualcomm bested all submissions by over 3X in performance per watt. Clearly, the Qualcomm development team takes a comprehensive system-level view of performance and power efficiency.

Let’s begin with the Qualcomm Neural Processing SDK, designed to help developers run one or more neural network models on Snapdragon mobile platforms, whether on the CPU, GPU, or Hexagon processor. The SDK provides a high-level pipeline for machine learning, including everything a developer needs to go from a trained DNN model to a network optimized for inference processing.

The Qualcomm software stack takes advantage of CPU, GPU, and Hexagon processor performance. Qualcomm Technologies Inc.

In addition to the Qualcomm Neural Processing SDK, Qualcomm AI Engine Direct, announced together with the new fused AI accelerator architecture on the Hexagon 780 processor, gives developers direct access to the hardware: not only the Hexagon 780 processor, but also the Adreno GPU and Kryo CPU. Qualcomm AI Engine Direct now offers a unified AI API across the whole Snapdragon platform. This API is also backward compatible and available on the previous 5th generation Qualcomm AI Engine, so a developer or OEM can use the same solution across Snapdragon platforms and leverage both the 5th and 6th generation AI Engines. Qualcomm Technologies, Inc. (QTI) is focused on modularity and extensibility, expanding on the user-defined operator concept to bring new capabilities that let developers create their own AI solutions, accelerated on Snapdragon.

Developers can also use the open-source AI Model Efficiency Toolkit (AIMET), which provides advanced model quantization and compression techniques for trained neural network models. Obviously, reducing the size and precision of a neural network readies the application to run efficiently on a smaller, low-power mobile device. But AIMET can also improve the performance and power efficiency of models running on the larger Cloud AI100 platform.
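To make the idea of reducing precision concrete, here is a minimal sketch of 8-bit affine quantization in plain Python. It is an illustration only and is not AIMET's API: the function names `quantize_int8` and `dequantize` are hypothetical, and AIMET's own techniques are more sophisticated than this per-tensor min/max scheme.

```python
def quantize_int8(weights):
    """Map float weights to integers in [0, 255] (illustrative, not AIMET)."""
    # Extend the range to include 0.0 so that zero is exactly representable.
    lo = min(min(weights), 0.0)
    hi = max(max(weights), 0.0)
    # One quantization step in float units; fall back to 1.0 for all-zero input.
    scale = (hi - lo) / 255 or 1.0
    # Integer code that represents the float value 0.0.
    zero_point = round(-lo / scale)
    q = [max(0, min(255, round(w / scale) + zero_point)) for w in weights]
    return q, scale, zero_point


def dequantize(q, scale, zero_point):
    """Recover approximate float values from the 8-bit codes."""
    return [(v - zero_point) * scale for v in q]


weights = [-1.0, -0.5, 0.0, 0.25, 1.0]
q, scale, zero_point = quantize_int8(weights)
restored = dequantize(q, scale, zero_point)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, max_error)
```

Each weight now fits in one byte instead of four, at the cost of a rounding error bounded by roughly one quantization step. That trade of a little accuracy for memory and bandwidth is what makes quantized models attractive on both low-power devices and data center accelerators.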

For autonomous vehicle functionality, the Qualcomm solution is the Qualcomm Snapdragon Ride Platform. Licensed developers can build solutions for it with the Qualcomm Autonomy and Advanced Driver Assistance (ADAS) SDK, a C++ API containing numerous classes and methods for performing image processing functions specific to automotive applications.

And for robotics developers, the Qualcomm Robotics RB5 Platform offers a next-generation solution that can be used to develop high-compute, artificial intelligence (AI)-enabled, low-power robots and drones for consumer, enterprise, defense, industrial, and professional service applications. Built around the Qualcomm QRB5165 processor, the Qualcomm Robotics RB5 Platform includes many of the features found in the Snapdragon 865 Mobile Platform, including its Hexagon Processor with specialized compute capabilities for AI, and 5G connectivity. This makes the platform well suited for adding AI operations such as on-device intelligence and computer vision algorithms to robotic and drone projects.

Conclusions

The Qualcomm Snapdragon and now the Cloud AI100 form one of the most widely deployed AI acceleration platforms in the industry in terms of volume. Consequently, developers need access to tool chains that make it easy to develop, optimize, and deploy on these chipsets. The portfolio of tools we have investigated appears to make it easy to port existing AI models and to optimize those models to run as fast and efficiently as possible. Providing a unified development stack across mobile, edge, and cloud should also enable Qualcomm ecosystem partners to extend their applications from one deployment domain to another.

Combining the AI software stack with the portfolio of power-efficient Snapdragon and Cloud processors should help Qualcomm continue to lead the AI market in mobile and edge, and now establish a position in the cloud.